Python Web Scraping: Solving CAPTCHAs Automatically

Build a Python web scraper that automatically solves CAPTCHAs using the requests library and a CAPTCHA solver API. Handles reCAPTCHA, hCaptcha, and Turnstile.

Python web scraping with automatic CAPTCHA solving lets you build scrapers that push through reCAPTCHA, hCaptcha, and Turnstile challenges without manual intervention. Python’s requests library handles HTTP communication, while a CAPTCHA solver API like uCaptcha takes care of the challenges your scraper encounters.

This guide walks through building a complete Python scraper that detects CAPTCHAs, solves them automatically, and continues extracting data. For a broader overview of CAPTCHA handling strategies across languages and tools, see the complete web scraping with CAPTCHA solving guide.

Prerequisites

Install the required packages:

pip install requests beautifulsoup4You’ll need a uCaptcha API key from ucaptcha.net. The API uses two endpoints:

POST https://api.ucaptcha.net/createTask— submit a CAPTCHA for solving.POST https://api.ucaptcha.net/getTaskResult— poll for the solution.

The CAPTCHA Solver Helper

Start by building a reusable solver class that wraps the uCaptcha API:

import time

import requests

class UCaptchaSolver:

def __init__(self, api_key: str):

self.api_key = api_key

self.base_url = "https://api.ucaptcha.net"

def create_task(self, task: dict) -> str:

response = requests.post(f"{self.base_url}/createTask", json={

"clientKey": self.api_key,

"task": task,

})

data = response.json()

if data.get("errorId"):

raise Exception(f"Task creation failed: {data['errorDescription']}")

return data["taskId"]

def wait_for_result(self, task_id: str, timeout: int = 120) -> dict:

elapsed = 0

while elapsed < timeout:

time.sleep(5)

elapsed += 5

response = requests.post(f"{self.base_url}/getTaskResult", json={

"clientKey": self.api_key,

"taskId": task_id,

})

data = response.json()

if data.get("errorId"):

raise Exception(f"Solve error: {data['errorDescription']}")

if data["status"] == "ready":

return data["solution"]

raise TimeoutError(f"CAPTCHA not solved within {timeout}s")

def solve(self, task: dict, timeout: int = 120) -> dict:

task_id = self.create_task(task)

return self.wait_for_result(task_id, timeout)This class handles the create-then-poll pattern in a single solve() call.

Detecting CAPTCHAs in HTML

Before solving, your scraper needs to identify which CAPTCHA is present. Use BeautifulSoup to parse the HTML and look for known markers:

import re

from bs4 import BeautifulSoup

def detect_captcha(html: str) -> dict | None:

soup = BeautifulSoup(html, "html.parser")

# reCAPTCHA v2

recaptcha_div = soup.find("div", class_="g-recaptcha")

if recaptcha_div and recaptcha_div.get("data-sitekey"):

return {

"type": "RecaptchaV2TaskProxyless",

"key": recaptcha_div["data-sitekey"],

"response_field": "g-recaptcha-response",

}

# hCaptcha

hcaptcha_div = soup.find("div", class_="h-captcha")

if hcaptcha_div and hcaptcha_div.get("data-sitekey"):

return {

"type": "HardCaptchaTaskProxyless",

"key": hcaptcha_div["data-sitekey"],

"response_field": "h-captcha-response",

}

# Cloudflare Turnstile

turnstile_div = soup.find("div", class_="cf-turnstile")

if turnstile_div and turnstile_div.get("data-sitekey"):

return {

"type": "TurnstileTaskProxyless",

"key": turnstile_div["data-sitekey"],

"response_field": "cf-turnstile-response",

}

# Check for reCAPTCHA script in source (invisible reCAPTCHA)

if "google.com/recaptcha" in html:

match = re.search(r'data-sitekey=["\']([a-zA-Z0-9_-]+)["\']', html)

if match:

return {

"type": "RecaptchaV2TaskProxyless",

"key": match.group(1),

"response_field": "g-recaptcha-response",

}

return NoneSolving reCAPTCHA v2: A Full Example

Here’s a complete scraper that navigates to a login page, detects reCAPTCHA v2, solves it, and submits the form:

from bs4 import BeautifulSoup

solver = UCaptchaSolver(api_key="YOUR_UCAPTCHA_KEY")

session = requests.Session()

session.headers.update({

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "

"AppleWebKit/537.36 Chrome/131.0.0.0 Safari/537.36",

"Accept-Language": "en-US,en;q=0.9",

})

TARGET_URL = "https://example.com/login"

# Step 1: Load the page

response = session.get(TARGET_URL)

captcha_info = detect_captcha(response.text)

if captcha_info:

print(f"Detected {captcha_info['type']} with key {captcha_info['key']}")

# Step 2: Solve the CAPTCHA

solution = solver.solve({

"type": captcha_info["type"],

"websiteURL": TARGET_URL,

"websiteKey": captcha_info["key"],

})

token = solution["gRecaptchaResponse"]

print(f"Solved. Token: {token[:50]}...")

# Step 3: Submit the form with the token

soup = BeautifulSoup(response.text, "html.parser")

form = soup.find("form")

action = form.get("action", TARGET_URL)

form_data = {}

for input_tag in form.find_all("input"):

name = input_tag.get("name")

value = input_tag.get("value", "")

if name:

form_data[name] = value

# Add credentials and CAPTCHA token

form_data["username"] = "your_username"

form_data["password"] = "your_password"

form_data[captcha_info["response_field"]] = token

result = session.post(action, data=form_data)

print(f"Form submitted. Status: {result.status_code}")

else:

print("No CAPTCHA detected.")This pattern works for any site with a standard HTML form protected by reCAPTCHA v2.

Handling Multiple Pages

Real scrapers don’t stop at one page. Here’s how to build a scraper that processes a list of URLs, solving CAPTCHAs as needed:

import random

import time

def scrape_with_captcha_handling(urls: list[str]) -> list[dict]:

results = []

for url in urls:

# Polite delay

time.sleep(random.uniform(2, 5))

response = session.get(url)

captcha_info = detect_captcha(response.text)

if captcha_info:

solution = solver.solve({

"type": captcha_info["type"],

"websiteURL": url,

"websiteKey": captcha_info["key"],

})

# Re-request with solved token (depends on site implementation)

response = session.post(url, data={

captcha_info["response_field"]: solution["gRecaptchaResponse"],

})

# Parse the data you need

soup = BeautifulSoup(response.text, "html.parser")

title = soup.find("h1").get_text(strip=True) if soup.find("h1") else ""

results.append({"url": url, "title": title, "html": response.text})

return resultsAdd random delays between requests to mimic human browsing and reduce the chance of triggering additional CAPTCHAs.

Async Scraping for Better Performance

For larger scraping jobs, use aiohttp to send requests concurrently and solve multiple CAPTCHAs in parallel:

import asyncio

import aiohttp

async def solve_captcha_async(session: aiohttp.ClientSession, task: dict) -> dict:

async with session.post("https://api.ucaptcha.net/createTask", json={

"clientKey": "YOUR_UCAPTCHA_KEY",

"task": task,

}) as resp:

data = await resp.json()

task_id = data["taskId"]

for _ in range(30):

await asyncio.sleep(5)

async with session.post("https://api.ucaptcha.net/getTaskResult", json={

"clientKey": "YOUR_UCAPTCHA_KEY",

"taskId": task_id,

}) as resp:

result = await resp.json()

if result["status"] == "ready":

return result["solution"]

raise TimeoutError("CAPTCHA solve timed out")

async def scrape_urls(urls: list[str]):

async with aiohttp.ClientSession() as session:

tasks = [scrape_single(session, url) for url in urls]

return await asyncio.gather(*tasks, return_exceptions=True)This lets you solve multiple CAPTCHAs concurrently rather than waiting for each one sequentially.

Error Handling and Retries

Production scrapers need to handle failures gracefully:

def scrape_with_retries(url: str, max_retries: int = 3) -> str | None:

for attempt in range(max_retries):

try:

response = session.get(url, timeout=30)

captcha_info = detect_captcha(response.text)

if captcha_info:

solution = solver.solve({

"type": captcha_info["type"],

"websiteURL": url,

"websiteKey": captcha_info["key"],

})

response = session.post(url, data={

captcha_info["response_field"]: solution["gRecaptchaResponse"],

})

return response.text

except TimeoutError:

print(f"CAPTCHA solve timeout on attempt {attempt + 1}")

except requests.RequestException as e:

print(f"Request error on attempt {attempt + 1}: {e}")

time.sleep(2 ** attempt) # Exponential backoff

return NoneKey failure scenarios to handle:

- CAPTCHA solve timeout — the solver took too long. Retry with a fresh task.

- Network errors — connection drops, DNS failures. Retry with backoff.

- Invalid site key — the page structure changed. Log the error and skip.

- Token expiration — you waited too long between solving and submitting. Solve again immediately before form submission.

Tips for Python CAPTCHA Scraping

Reuse sessions. The requests.Session object maintains cookies across requests. After solving one CAPTCHA, the session cookies may grant you access to subsequent pages without additional challenges.

Set realistic headers. Always include a current User-Agent, Accept-Language, and other standard headers. Missing headers are a strong bot signal.

Use proxyless task types when possible. RecaptchaV2TaskProxyless is simpler and faster than proxy-based tasks. Only use proxy task types when the target site validates IP consistency between the CAPTCHA solve and form submission.

Monitor your CAPTCHA spend. Each solve costs money. Track how many CAPTCHAs you’re solving per session and optimize your request patterns to minimize triggers.

Conclusion

Python’s ecosystem makes it straightforward to build scrapers that handle CAPTCHAs automatically. The requests library handles HTTP, BeautifulSoup parses HTML for CAPTCHA detection, and uCaptcha’s API solves the challenges across multiple providers. For more complex scenarios involving JavaScript-rendered pages, consider combining this approach with a browser automation framework, or look at scaling up with a production scraping pipeline. uCaptcha routes your solve tasks across CapSolver, 2Captcha, AntiCaptcha, and more, so you get a single integration point with built-in redundancy.

Related Articles

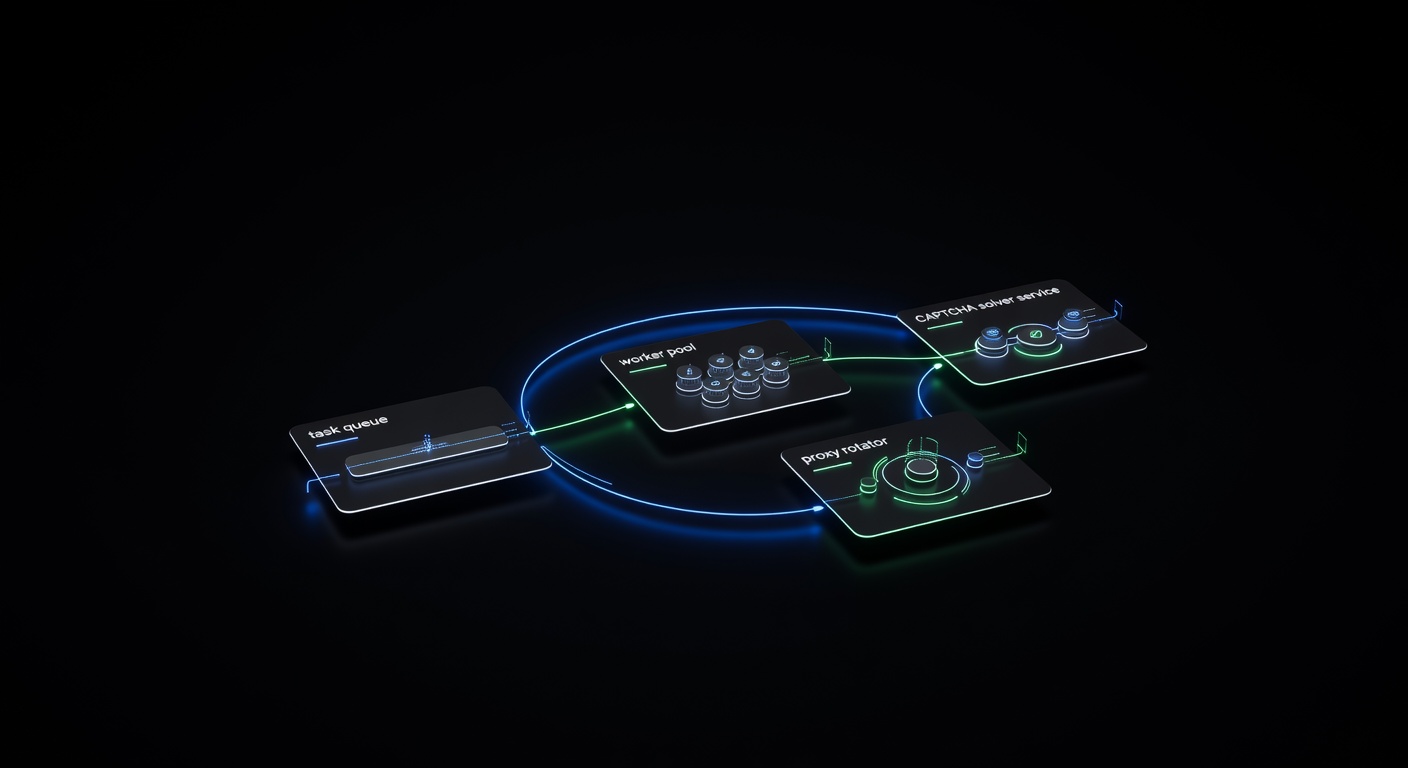

Building a Production CAPTCHA-Solving Scraping Pipeline

Architecture guide for building a production-grade web scraping pipeline with integrated CAPTCHA solving — queues, retries, proxy rotation, and monitoring.

Pillar Guide

Pillar Guide

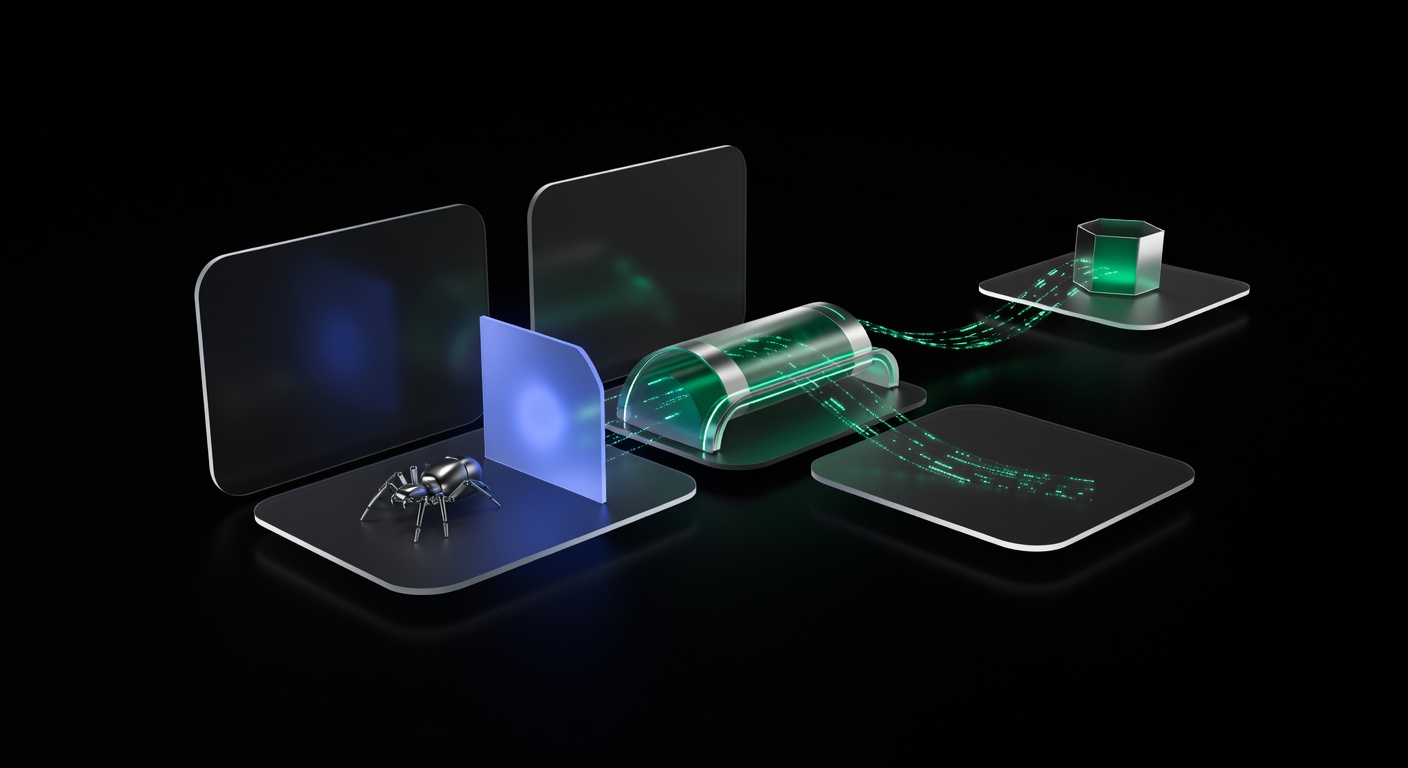

Web Scraping with CAPTCHA Solving: The Complete Guide

How to handle CAPTCHAs in web scraping pipelines — from detecting CAPTCHA types to integrating solver APIs, managing proxies, and building resilient scraping workflows.